Your AI output keeps disappointing you. Here's why.

The Sam Altman quote that explains it.

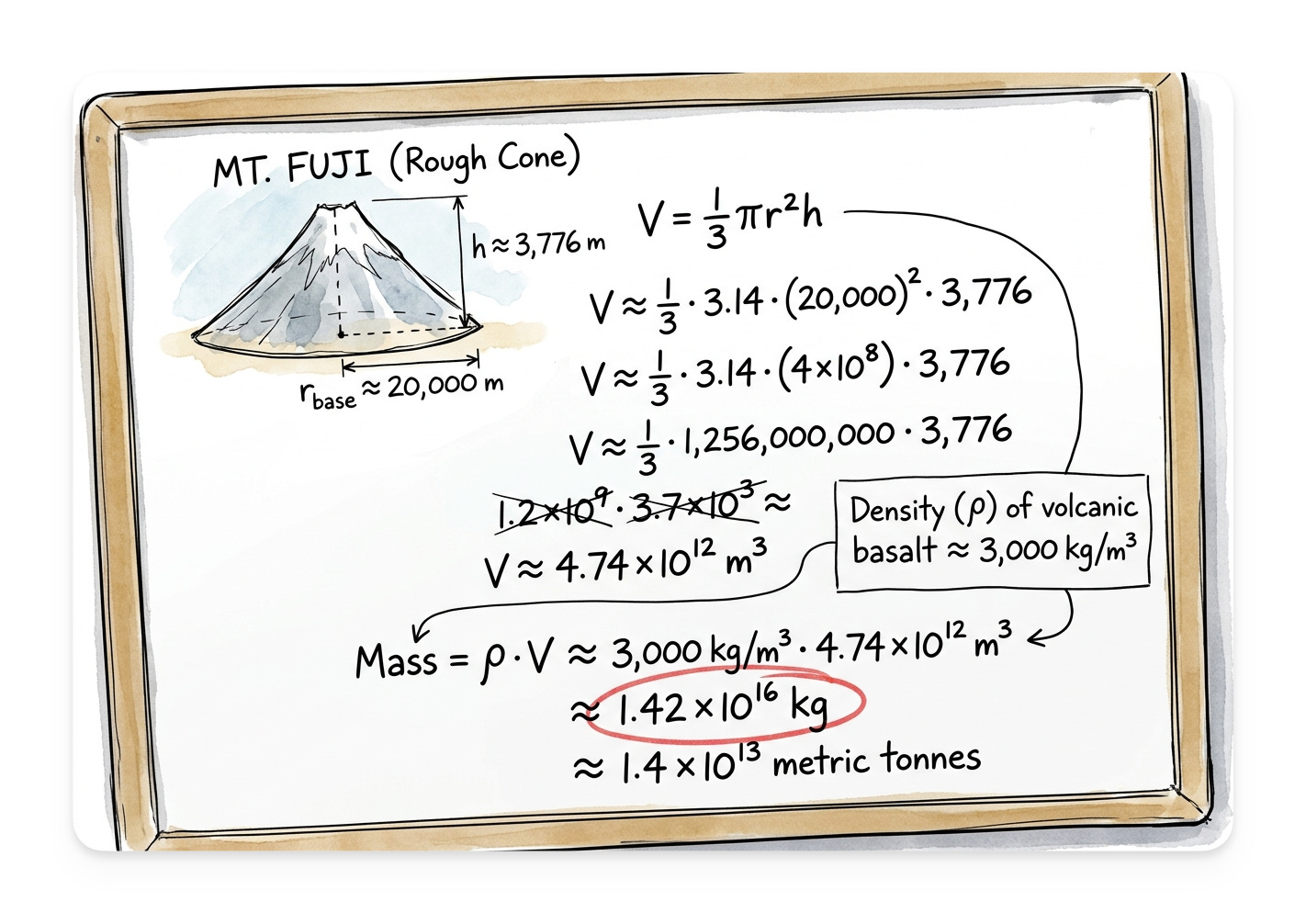

In the late 1990s, if you wanted a job at Microsoft, you sat across from someone who asked you how you would figure out how much Mount Fuji weighed. The riddles weren’t really about Mount Fuji.

They were a proxy for the skill Microsoft thought it was hiring for at the time, which was the ability to reason through a problem you’d never seen before with no resources except your own head.

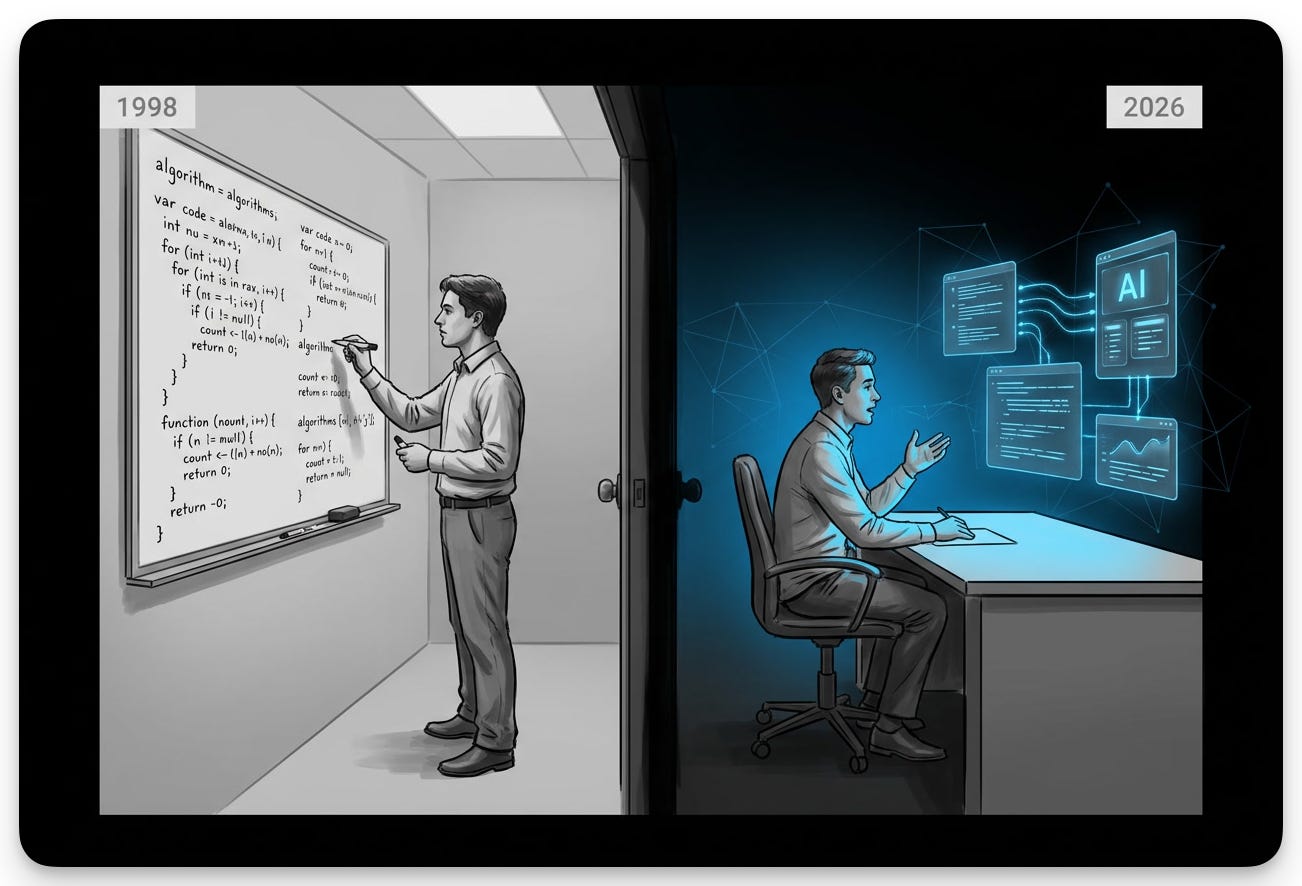

Google’s interviews famously took the same idea further. Candidates wrote algorithms on a whiteboard while three engineers watched them reason out loud. McKinsey ran case studies. Investment banks ran modeling tests.

Every one of those interview formats was a bet about what the company actually needed from a new hire... encoded as a test you could either pass or fail in a small room with the door closed.

Tests changed when the work changed.

The whiteboard test stuck around for thirty years because writing code by hand while reasoning out loud was a reasonable proxy for the actual job: thinking on your feet while producing. The case study stuck around at McKinsey because the actual job was structured ambiguity. When the work shifted, the tests shifted with it. Sometimes faster than the people taking them realized.

I came across a Sam Altman quote that lands differently once you understand what those tests were really filtering for.

“We basically would like to sit you down with something that would have been impossible for one person to do in two weeks this time last year, and watch them do it in 10 minutes or 20 minutes.”

To compress two weeks of work into twenty minutes, you have to be working alongside AI. The bottleneck shifts to how clearly you can describe what to produce.

OpenAI is changing what they’re testing for. The framing in the press was that they’re “evolving” the process, which is a polite word for what just happened. They’re not testing if you can build something anymore. They’re testing if you can describe what to build clearly enough that AI does it for you. The build follows. The description is what they’re grading.

The company making the most advanced models on earth doesn’t care if you can write the code. They care if you can specify the work.

OpenAI isn’t the point

I know what you’re thinking.

“Max, why should I care? I have zero interest in working for OpenAI.”

I get it. But you’re missing the point.

OpenAI made the shift explicit. If you produce real work with AI, the same filter applies to your work.

For instance…

If you’re a writer, the test is whether you can describe the piece you’d write so completely that AI can draft it and you only edit.

If you’re a designer, it’s whether you can describe the layout, the constraints, the brand voice, the user need, with enough specificity that the model produces something you’d actually ship.

If you’re a consultant, it’s whether you can encode your judgment into a prompt that another person could run without you in the room.

I see this recognition starting to surface, even when people can’t quite name it yet.

Out of all the workshops I run, the most-requested format is the same every time:

“I just want to watch you build live and explain why you’re doing things.”

People who’ve worked with AI long enough know there’s something deeper going on than just better prompts. They want to see how I think.

All the attention is on the machines. Better prompts, better tools, better models. The gap is somewhere else entirely. It’s THE gap that separates the top 5-10% of AI users from everyone else.

This is the new core skill and what people want to watch when I run a workshop.

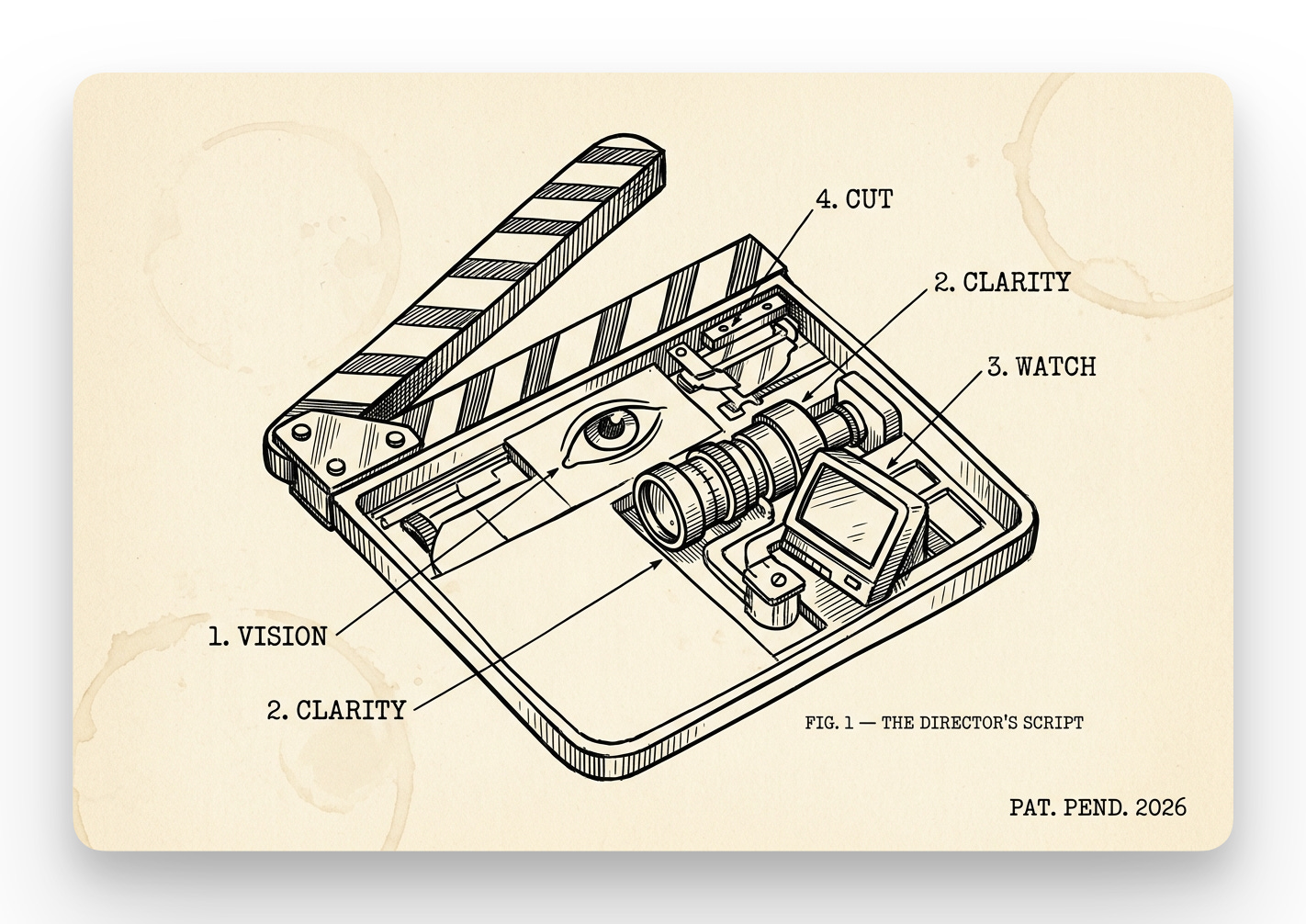

Directing.

How directing actually works

Film directors hold a vision, communicate it, watch what gets made, and call cut when it misses. With AI, it’s the same skill. Knowing what you want clearly enough to describe it. Recognizing when the output drifted. Closing the gap, in real time. The skill that reads as “soft” until you watch someone good at it produce ten times the output of someone with twice the technical training.

Every director develops a signature over time, the moves they reach for and the ones they skip. You already have one. It’s in every prompt you’ve written. You just haven’t read them as a body of work.

I want to give you a receipt for this, because I am the receipt.

Six months ago I had never written a line of code. I still haven’t, even though I’ve since presented at a hackathon. In that time I’ve shipped more than fifty custom Claude skills, set up an autonomous agent system that runs on a Mac Mini in my living room, and started ghostwriting a weekly newsletter for a marketing legend whose voice I extracted into a system. None of that required me to learn syntax. All of it required me to learn how to specify, diagnose, and direct.

The signature got built one directed session at a time.

The clearest version of this lives inside one of my retainer clients. Three people on the team I work with closely, three completely different AI installs.

Tom runs sales. He got a system that finds, ranks, and pre-qualifies leads, generates custom outreach, and plugs straight into his email and CRM.

David handles client delivery. His install is a Claude Project loaded with messaging for three different avatars, strategy, and deliverables. It’s wired into the Project Management System (PMS), the calendar, and Fireflies, so every meeting he runs gets processed automatically. Tasks land in the PMS. Client context lands in Google Drive. Claude proactively suggests the next step on whatever deliverable is open.

Sarah runs hiring. Her system sorts the resume inbox, scores candidates against a company rubric, and generates a personal profile card on each person for the leadership team to review.

The reason all three worked isn’t that I knew Python (I absolutely do not). It’s that I sat with each of them long enough to specify what their work actually was, where the friction lived, and what shape an AI assist needed to take to slot in without breaking what already worked. The build was the easy part. The directing was the whole thing.

It’s probably not a coincidence this is where I am focusing. The two things I spend most of my time on are this (AI implementation) and Cognitive Fingerprint™, which is extracting what makes someone unique and articulating it back to them clearly enough that they can use it.

Both are articulation skills, and both fall under…directing. Which is why the skill the new interview filters for isn’t a tech skill at all.

What this means for you

You probably suspect, if you’re honest, that the muscle for the new test is underdeveloped. You can prompt. You’ve used the tools. You’ve watched a few people on YouTube spec out a Claude Code build and thought “I could do that,” and then tried it on something real and noticed the model drifted, you didn’t catch it, you cleaned up the output by hand, and you wondered if you’d actually saved any time.

You’re not missing a tool.

You’re not missing a better model.

You’re missing reps at directing.

Reps come from running real work through repeatable patterns.

Knowing that directing is the skill, and seeing your own directing on the page, are two different things.

The AI Director’s Script is the kit. Bring three real prompts you’ve used recently in AI sessions. The kit walks you through each prompt the way a director reads a draft: for vision, clarity, drift, and cut. Then it rewrites them as scripts that work without you in the room. By the end, you’ll have three rewritten prompts and your directing signature named: the move you reach for, the move you skip.

Here’s how it works.

🎬 The AI Director’s Script

Every great film starts with a script. Every great AI session does too. This is yours.

How to use this kit

Bring three real prompts. Use whatever’s actually in your AI history this week. The email draft, the analysis request, the plan you sketched, whatever rough thing you typed last. The rougher, the better.

Run the prompt below in any AI assistant. Claude, ChatGPT, Gemini all work, anything that holds a multi-turn conversation. The session takes 15-20 minutes if you stay honest with it.

Expect to be asked what you actually meant. The kit pushes back on vague answers. That pushback is the directing rep happening in real time. The discomfort is the work. If you breeze through, your prompts were probably already in good shape, and you’ll have the rewrites to prove it. If you get stuck, that’s the gap you came here to find.

You’ll walk away with three things. Three rewrites you can paste into your next AI session immediately. Script notes on each prompt, naming what was thin and what got fixed. And your directing signature: the move you reach for, the move you skip, and the habit change that closes the gap.

What it looks like in practice

For example, let’s run the kit on a simple prompt. Take one almost everyone has typed at some point:

“Write me a LinkedIn post about how AI is changing knowledge work.”

The kit walks you through what that prompt is missing.

Vision (what you actually wanted): the prompt doesn’t say. “Thoughtful, not cliché” might be in your head, but the model can’t see it. Without vision, you get the AI default. Generic bullet points about productivity gains.

Clarity (could anyone else have written this prompt?): no audience, no length, no format, no examples of good. A stranger picking up this prompt would produce exactly the bullet-point post you didn’t want.

Watch (where did the output drift?): the whole output was the drift. You wanted a personal angle. You got “automation of routine tasks” and “new opportunities for growth.”

Cut (what would have prevented it?): a sentence naming the audience. A sentence naming what the post should NOT do. A sentence about what shape “good” takes.

The rewrite:

“Write a LinkedIn post for marketing peers who use AI daily but don’t think deeply about it. 200 words, first-person, conversational. Open with one specific moment from this week where I noticed AI changing how I work. Skip the productivity gains and automation framing. Something subtler. No bullets, no buzzwords, no ‘in today’s fast-paced world.’ End with a question that invites disagreement in the comments.”

Your directing signature:

You reach for vision well. You know what you don’t want, even when you can’t name what you do want. You skip clarity most often. Your prompts assume the model knows your audience and your taste. The habit change: name the audience and one anti-pattern at the start of every prompt. One thing the output should NOT do.
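
If you like seeing a habit written down as a rule, here is one rough way to sketch it in Python. This is not part of the kit, and the names (`PromptSpec`, `gaps`, `render`) are made up for illustration. The idea is simply that a prompt refuses to go out until it names an audience, a shape, and at least one thing to avoid.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the pieces the rewrite spells out."""

    task: str
    audience: str = ""                               # who the output is for
    shape: str = ""                                  # length, voice, format
    avoid: list[str] = field(default_factory=list)   # anti-patterns to skip

    def gaps(self) -> list[str]:
        """Return the fields a stranger would have to guess."""
        missing = []
        if not self.audience.strip():
            missing.append("audience")
        if not self.shape.strip():
            missing.append("shape")
        if not self.avoid:
            missing.append("avoid")
        return missing

    def render(self) -> str:
        """Build the prompt text, refusing while anything is still missing."""
        missing = self.gaps()
        if missing:
            raise ValueError("Prompt is thin on: " + ", ".join(missing))
        return (
            f"{self.task} for {self.audience}. {self.shape}. "
            f"Avoid: {'; '.join(self.avoid)}."
        )


# The rough prompt from the example: only the task is filled in.
rough = PromptSpec(
    task="Write me a LinkedIn post about how AI is changing knowledge work"
)
print(rough.gaps())

# A directed version, borrowing details from the rewrite.
directed = PromptSpec(
    task="Write a LinkedIn post",
    audience="marketing peers who use AI daily but don't think deeply about it",
    shape="200 words, first-person, conversational",
    avoid=["bullets", "buzzwords", "productivity-gains framing"],
)
print(directed.render())
```

The rough prompt reports all three gaps, and the directed one renders cleanly. The check is deliberately crude: it only confirms that something was written in each slot, not that what was written is any good. That judgment is still the directing.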