Everyone’s Publishing Principles. Nobody’s Publishing Proof.

A community member asked me last week:

“How do you come up with so many prompts?”

My answer:

“Let me show you.”

I asked him who his last client was. He said a boutique financial company that sells managed futures. I’d never worked in managed futures. Never studied alternative investments. Didn’t know the industry, the players, or the problems.

Seventy-three minutes later I had a five-layer diagnostic framework, a 20-question intake questionnaire, three chained AI prompts, a branding strategy, a direct-response landing page, and a $297 product called The Consultant’s Offer Engine.

With a lead magnet.

With a guarantee philosophy.

With a name that took five rounds of rejection to surface.

Twenty-five prompts from two chat windows in one afternoon.

I recorded the whole thing: every prompt, every decision, every timestamp. I’ll share the full build log below. But the build log isn’t why I’m writing this.

I’m writing this because three conversations landed on my desk (sounds way cooler than inbox) this week saying the same thing in different words. Nate Jones. Nathaniel Whittemore. And the third wasn’t a conversation I read. It was one I was inside of, a live build session with a room full of people who’d never watched this happen in real time.

My man Keith Bauman needed a product. We had no plan. That session is the build log.

The Part Everyone Gets Right

Nate Jones (the most prolific creator of quality AI content) put it bluntly:

“This is an art you learn by doing. You do not get to learn to ride a horse by reading a book. You do not get to learn to swim by sitting in a deck chair and watching the ocean. You just got to get in.”

Nathaniel Whittemore, on The AI Daily Brief, went further. He’s been building dozens of live projects despite being, in his words, “completely and utterly non-technical.”

His argument: the capabilities everyone says are coming are already here. Right now. For anyone willing to wrestle with them.

And the line that I loved:

“The foundational mindset shift is to stop looking for tutorials or videos or explainers and fully embrace the idea of AI itself as your learning and build partner.”

They’re both right. The problem I am going to talk about below isn’t them. It’s the ecosystem that heard what they said, wrote it in a carousel, and called it a contribution.

They took the mindset and made it a meme.

The Missing Half

Principles are easy to nod at. Hard to picture.

“Start with vision, not task.” Sure. What does that look like at 4:37 on a Tuesday?

“Push back hard and often.” On what? When? How do you know it’s working?

“Dump first, organize later.” What does “dumping” look like when you’re building something you’ve never built before in an industry you’ve never worked in?

The AI learning conversation is full of good advice and almost completely empty of evidence. Lots of principles. Very few build logs.

So I made one. And then I named each move so you could steal it.

I’m not going to show you all twenty-five moves in sequence. A sequence only makes sense in motion. What I can show you is seven moves that changed how the build went, named well enough that you recognize them when they show up in your own work.

The full build log has all twenty-five, with universal prompt templates you can run against any industry.

The Moves

Move 1: The Wide Net

This is how I started my session with Claude…

“What are the top 10 biggest top-of-funnel problems that a boutique financial company has that sells managed futures. Don’t ask me other questions just do your best.”

No strategy, no precision. Dump everything, and sort that sucker out later.

The Universal Version: Pick an industry, ask for the top 10 friction points, and tell the AI not to slow down with clarifying questions. You want volume, not accuracy. You can filter later.
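If you want the Wide Net as something reusable, here is a minimal sketch of it as a fill-in-the-blank template. The function name `wide_net_prompt` and its parameters are my own illustration, not something from the session; the wording follows the prompt quoted above.

```python
def wide_net_prompt(industry: str, focus: str = "top-of-funnel", count: int = 10) -> str:
    """Build a Wide Net prompt: ask for volume and forbid clarifying questions.

    All names here are hypothetical; only the prompt wording mirrors the article.
    """
    return (
        f"What are the top {count} biggest {focus} problems for {industry}? "
        "Don't ask me other questions, just do your best."
    )


# Example: the industry from the build session.
prompt = wide_net_prompt("a boutique financial company that sells managed futures")
print(prompt)
```

Swap in any industry and paste the result into a fresh chat. The only fixed parts are the request for a list and the instruction not to slow down.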

That’s “go wide first, react later” in action. Except you can see it happening. You can see the 10 friction points Claude surfaced. You can see the throughline sentence it added on its own at the end. And you can see me grab that sentence and feed it back in the next move.

Move 2: The Thread Pull

I copied Claude’s own best insight and pasted it back:

“Let’s break this apart into micro problems. Let’s look at this from first principles, what are we really trying to solve here?”

The Universal Version: Find the single best sentence in the AI’s output, quote it back, and ask it to decompose that sentence using first principles. You do the selecting. The AI does the expanding.
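The Thread Pull can be sketched the same way. This is a rough template under my own assumptions: `thread_pull_prompt` is a hypothetical helper, and the placeholder sentence stands in for whatever line you select from the AI's output. The decomposition request is the one quoted above.

```python
def thread_pull_prompt(best_sentence: str) -> str:
    """Quote the AI's best sentence back and ask for a first-principles breakdown.

    Hypothetical helper: you pick the sentence, the AI does the expanding.
    """
    # Drop surrounding whitespace and any quote marks copied along with the sentence.
    quoted = best_sentence.strip().strip('"“”')
    return (
        f'You wrote: "{quoted}"\n\n'
        "Let's break this apart into micro problems. "
        "Let's look at this from first principles, what are we really trying to solve here?"
    )


# Placeholder only: replace with the sentence you actually selected.
print(thread_pull_prompt("<paste the AI's best sentence here>"))
```

The selecting stays manual on purpose. The template only handles the quoting and the ask.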

Whittemore calls this “AI as mirror.”

Nate Jones would call it directing AI with good knowledge toward an outcome.

Move 3: The Landscape Survey

Before building anything, I stopped and asked:

“What are the top frameworks for identifying bottlenecks in any industry, and which fits this situation best?”

Claude surfaced Goldratt’s Theory of Constraints, the AARRR funnel, value stream mapping. Then told me why none of them were quite right for this use case, and recommended a hybrid of constraint mapping and a buyer journey diagnostic.

The Universal Version: Before you decide what to build, ask the AI to map the intellectual territory. See what already exists. You’ll either borrow the best parts or learn exactly why you need something different. The choice you make here determines the architecture of everything that follows.

Move 4: The Ambition Check

Claude was ready to build. I pumped the brakes. I hadn’t decided what level of ambition this product should have. A named diagnostic? A scoring system? A category claim? That’s a strategic decision, and it has to happen before the tactical one.

The Universal Version: Before you build anything, ask the AI to show you the spectrum of ambition levels for what you’re creating. Then choose. The choice reshapes everything downstream.

Move 7: The Rough Pass

Nine words:

“Ok just take a stab at the whole system.”

Nobody talks about this one. Perfectionism kills momentum in AI conversations the same way it kills it everywhere else. I didn’t refine the questionnaire for three rounds. I built all four components in one rough pass so I could see the whole machine, then adjust.

The Universal Version: Ask for the complete rough draft of the entire system before perfecting any single piece. You need to see the whole chain before you can know which link matters most.

Move 14: The Discovered Price

I said “$297 product” out loud at some point in the session. Not as a decision. More like a placeholder. Claude heard it, built around it, and handed back a full tiered architecture with a lead magnet at the bottom and a $297 core offer at the top. The realization in the room was quieter than a typo story.

We hadn’t decided on a price. We’d been talking for an hour, and nobody had stopped to decide. The price had already been chosen…just not consciously. The architecture that came back was better than anything we would have designed from scratch. The lesson isn’t “make mistakes faster.”

It’s that you’ve already made more decisions than you think. Sometimes you need to see what the AI builds to find out what you actually believe.

Nate Jones:

“You’re actually safer leaning in and going faster than going slower, because slower forces you to constantly think about braking, stopping, and adjusting.”

Move 25: The Rejection Loop

The naming sequence is the part I keep coming back to.

I call it The Rejection Loop in the build log. For five rounds, Claude generated names. I rejected them. Each rejection forced me to articulate what was actually wrong.

“Diagnostic” was wrong because the buyer doesn’t think in diagnostics. “Problem OS” was wrong because they don’t care about problems. They care about having a thing. An offer. Something they can stand behind.

The Principle Underneath the Whole Loop: I hate calling anything a diagnostic or an audit. When you hear those words, it feels like a test. Like homework. Like you have to do something. Put the outcome there instead.

They don’t want the diagnostic. They want what the diagnostic finds. “Offer Engine” isn’t a thing you do, it’s a thing that runs. In the buyer’s mind, that’s a big difference.

“The Consultant’s Offer Engine.” The name came from us, but only because the conversation stripped away everything that wasn’t right until the real word surfaced.

In a meeting room, that’s five weeks and a whiteboard covered in sticky notes nobody looks at again.

Nate Jones said there’s no mature state to wait for.

“There’s only a continuously steepening curve, and it’s going to reward folks who can climb early and go fast.”

Whittemore said the capabilities are available now for anyone high-agency enough to work through the rough edges.

The Offer Engine build is what happens when you stop waiting. Zero expertise in the industry in under an hour…

A freakin’ complete product with a business model.

You can sit in the deck chair. Or you can get in the water.

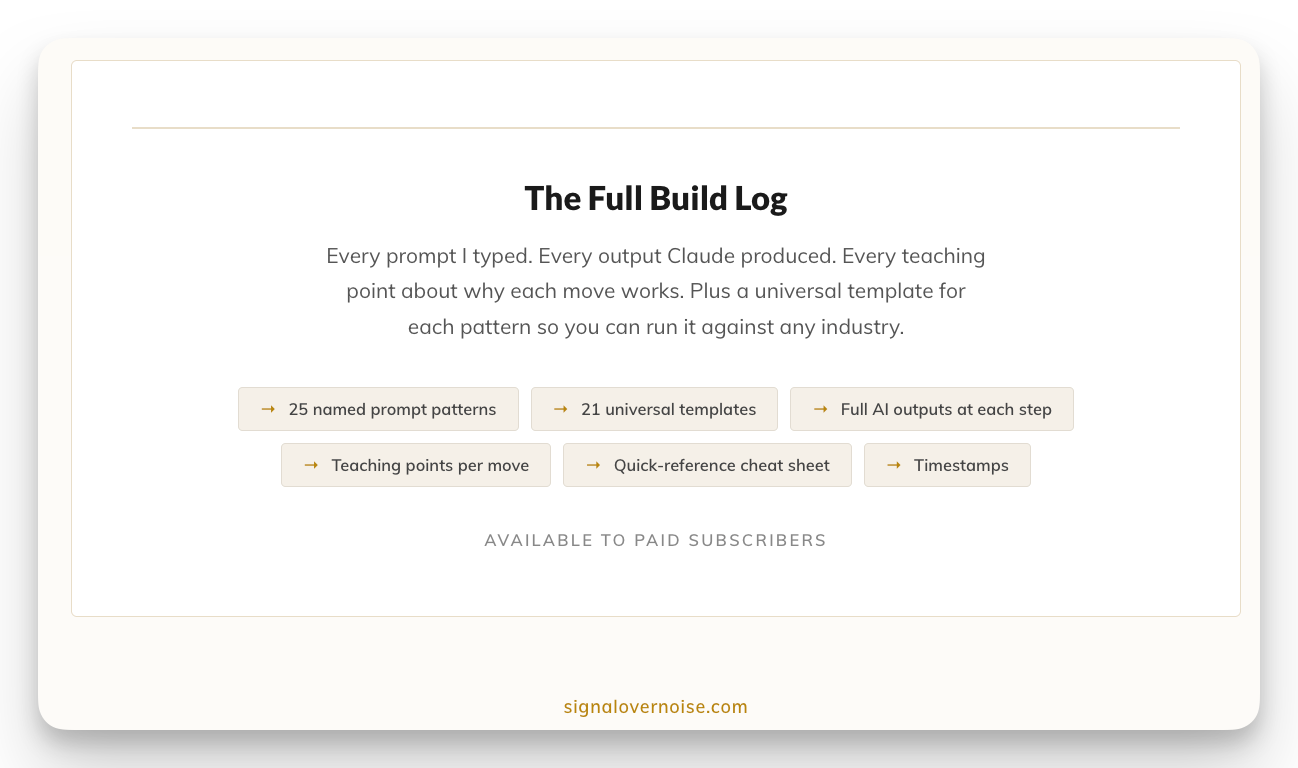

🔒 THE FULL RECONSTRUCTION

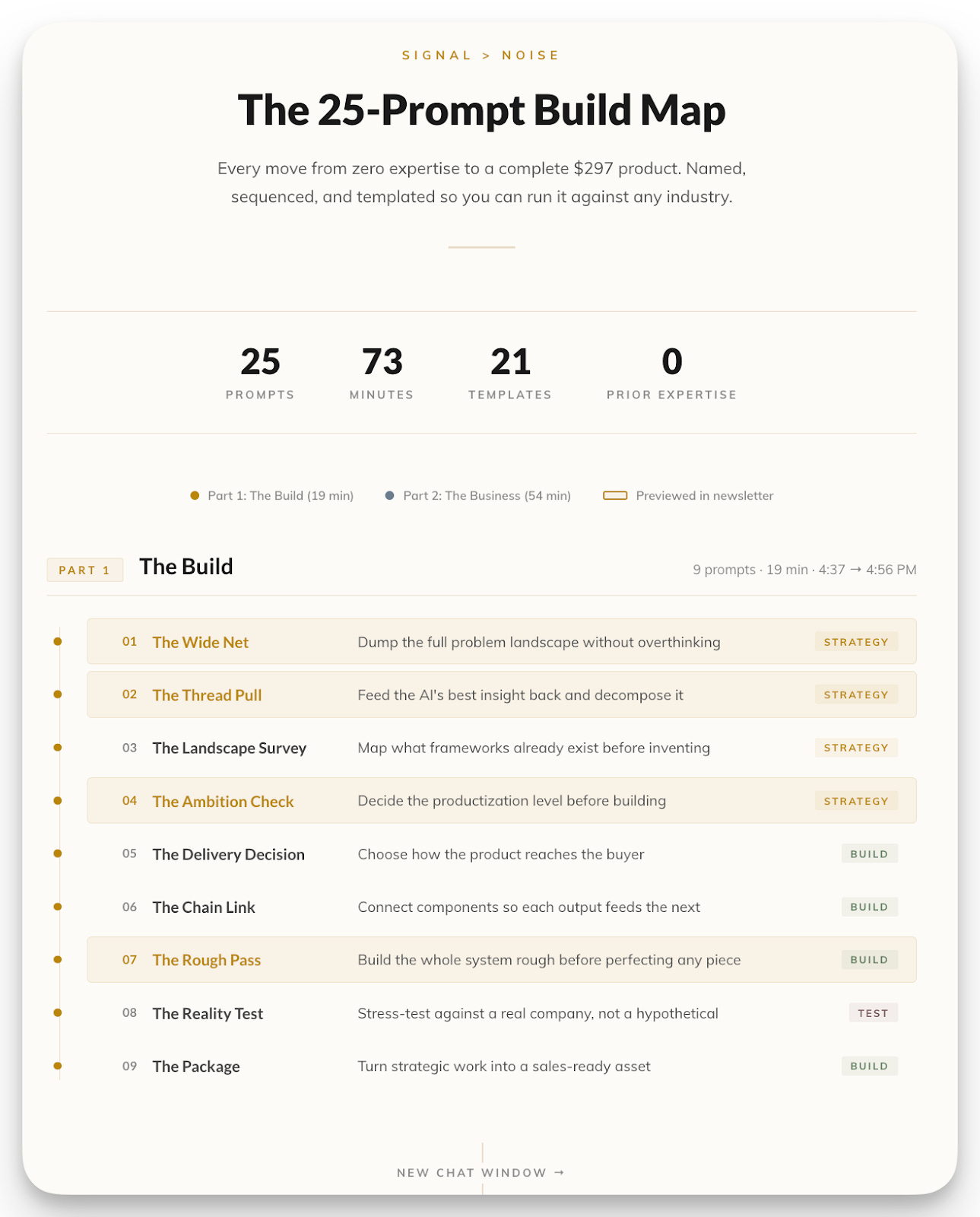

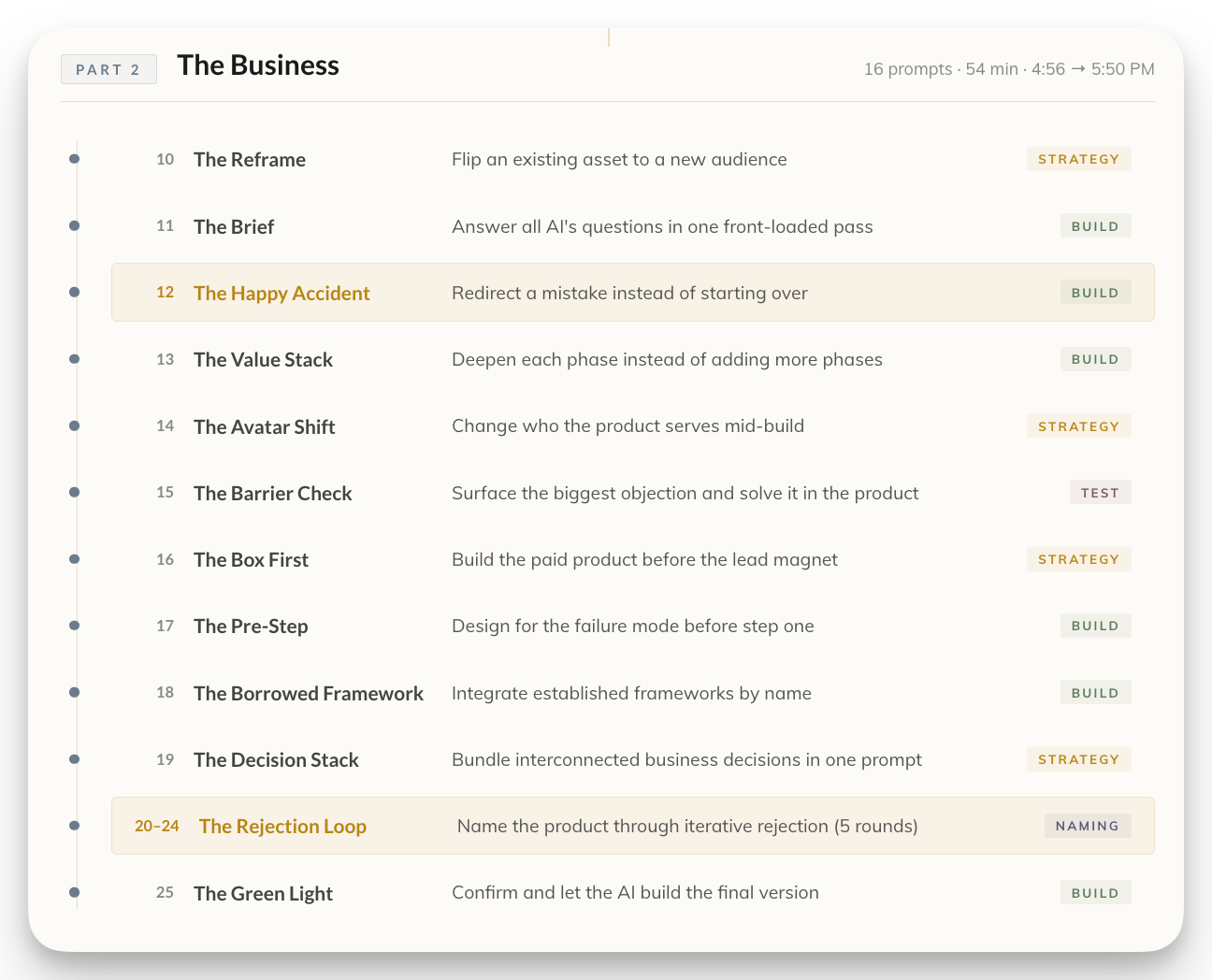

I showed you seven of the twenty-five moves. The full reconstruction has the rest, and it’s not a document. It’s an interactive site.

Two parts. Two chat windows. Nineteen minutes to build a complete consulting product. Thirty-nine more to turn it into a $297 business.

Part I is the build. Zero domain expertise, nine prompts, a five-layer diagnostic framework, a 20-question intake questionnaire, and a reusable skill that works against any industry. Part II is the business: how the same session produced a two-tier product ecosystem, 10 Phase Accelerators, a community upsell path, and a complete product blueprint.

Every prompt is there, in order, with timestamps. But the prompts aren’t the point. Each one maps to a named pattern with a universal template you can copy directly from the page. My prompts were about managed futures. The templates work on anything.

The site also includes seven meta-principles extracted from the full 73 minutes. The things you only see when you look at all 25 moves together, not one at a time.

Not principles. A reconstruction. With every move named, every template copyable, and every decision explained.

🔒 THE PROBLEM RADAR

The Consultant’s Offer Engine starts with a problem. Before you can build the engine, you need to know what it runs on.

The Problem Radar is a skill that finds the problem worth building around before you build anything. It searches real communities, runs your idea through three filters, and hands you back the exact language the market uses to describe its own pain.

It also tells you when a problem doesn’t pass. Better to know before you build around the wrong one.